Sign up to save your library

With an OverDrive account, you can save your favorite libraries for at-a-glance information about availability. Find out more about OverDrive accounts.

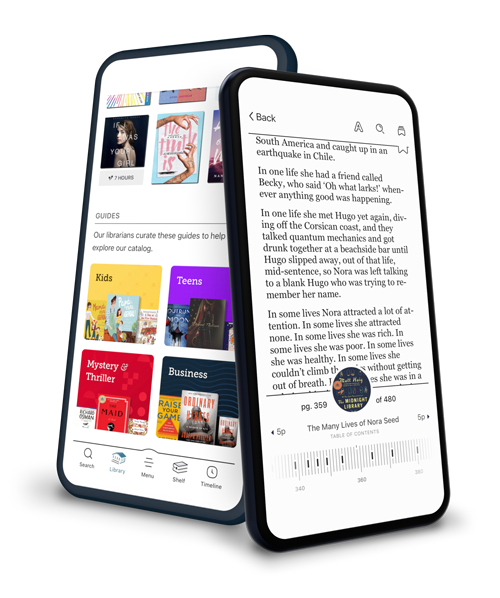

Find this title in Libby, the library reading app by OverDrive.

Search for a digital library with this title

Title found at these libraries:

| Library Name | Distance |

|---|---|

| Loading... |

Psychology and philosophy have long studied the nature and role of explanation. More recently, artificial intelligence research has developed promising theories of how explanation facilitates learning and generalization. By using explanations to guide learning, explanation-based methods allow reliable learning of new concepts in complex situations, often from observing a single example.

The author of this volume, however, argues that explanation-based learning research has neglected key issues in explanation construction and evaluation. By examining the issues in the context of a story understanding system that explains novel events in news stories, the author shows that the standard assumptions do not apply to complex real-world domains. An alternative theory is presented, one that demonstrates that context — involving both explainer beliefs and goals — is crucial in deciding an explanation's goodness and that a theory of the possible contexts can be used to determine which explanations are appropriate. This important view is demonstrated with examples of the performance of ACCEPTER, a computer system for story understanding, anomaly detection, and explanation evaluation.