Transformers in Deep Learning Architecture

ebook ∣ Definitive Reference for Developers and Engineers

By Richard Johnson

Sign up to save your library

With an OverDrive account, you can save your favorite libraries for at-a-glance information about availability. Find out more about OverDrive accounts.

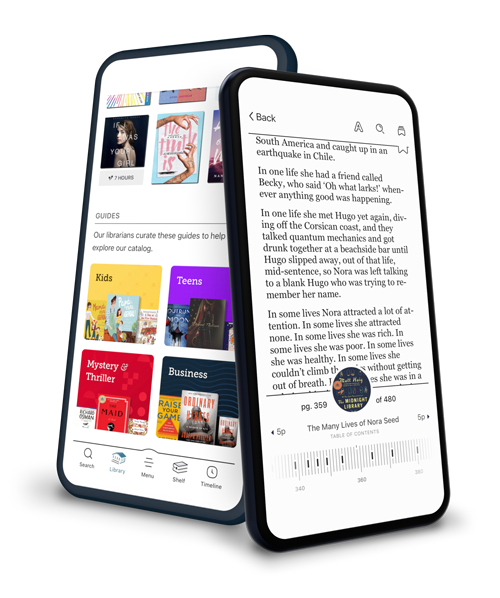

Find this title in Libby, the library reading app by OverDrive.

Search for a digital library with this title

Title found at these libraries:

| Library Name | Distance |

|---|---|

| Loading... |

"Transformers in Deep Learning Architecture" presents a comprehensive and rigorous exploration of the transformer paradigm, the foundational architecture that has revolutionized modern artificial intelligence. The book opens by situating transformers within the historical context of neural sequence models, methodically tracing their evolution from recurrent neural networks to the self-attention mechanisms that address the limitations of those earlier models. Early chapters lay a strong mathematical and conceptual foundation, introducing key terminology, theoretical principles, and detailed comparisons with alternative architectures to prepare readers for a deep technical dive.

At its core, the book delivers an in-depth analysis of the architectural details and operational intricacies that underpin transformer models. Subsequent chapters dissect the encoder-decoder framework, decompose self-attention and multi-head attention mechanisms, and discuss design choices such as positional encodings, feedforward networks, normalization strategies, and scaling laws. Readers also encounter a nuanced treatment of advanced attention variants—including efficient, sparse, and cross-modal extensions—along with proven paradigms for pretraining, transfer learning, and domain adaptation. Rich case studies illustrate the extraordinary performance of transformers in natural language processing, vision, audio, and multimodal tasks, highlighting both established applications and emerging frontiers.

Beyond technical mastery, the book addresses the practical dimensions and responsible deployment of large transformer models. It guides practitioners through scalable training, distributed modernization, and infrastructure optimization, while confronting contemporary challenges in interpretability, robustness, ethics, and privacy. The final chapters forecast the transformative future of the field with discussions on long-context modeling, symbolic integration, neuromorphic and quantum-inspired approaches, and the profound societal implications of widespread transformer adoption. Altogether, this volume stands as both an authoritative reference and a visionary roadmap for researchers and engineers working at the cutting edge of deep learning.